
Goal: Get a computer vision pipeline working.

Skills: Connect a machine to Viam, configure components in the Viam UI, use fragments to add preconfigured services.

Time: ~10 min

Before starting this tutorial, you need the can inspection simulation running. Follow the Gazebo Simulation Setup Guide to set it up.

Once you see “Can Inspection Simulation Running!” in the container logs and your machine shows Live in the Viam app, return here to continue.

The simulation runs Gazebo Harmonic inside a Docker container. It simulates a conveyor belt with cans (some dented) passing under an inspection camera. viam-server runs on the Linux virtual machine inside the container and connects to Viam’s cloud, just like it would on a physical machine. Everything you configure in the Viam app applies to the simulated hardware.

If you followed the setup guide, your machine (inspection-station-1) should already be online.

Ordinarily, after creating a machine in Viam, you would download and install viam-server together with the cloud credentials for your machine. For this tutorial, we’ve already installed viam-server and launched it in the simulation Docker container.

Your machine is online but empty. To configure your machine, you will add components and services to your machine part in the Viam app. Your machine part is the compute hardware for your robot. This might be a PC, Mac, Raspberry Pi, or another computer.

In the case of this tutorial, your machine part is a virtual machine running Linux in the Docker container.

Find inspection-station-1-main in the CONFIGURE tab.

You’ll now add the camera as a component.

To add the camera component to your machine part:

Search for gz-camera, select the gz-camera:rgb-camera model, and enter inspection-cam for the name.

To configure your camera component to work with the camera in the simulation, you need to specify the correct camera ID. Most components require a few configuration parameters.

In the ATTRIBUTES section, add:

```json
{
  "id": "/inspection_camera"
}
```

Click Save in the top right.

You declared “this machine has a camera called inspection-cam” by editing the configuration in the Viam app. When you clicked Save, viam-server loaded the camera module, added a camera component, and made the camera available through Viam’s standard camera API. Software you write, other services, and user interface components will use the API to get the images they need. Using the API as an abstraction means that everything still works if you swap cameras.
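To make the abstraction concrete, here is a minimal plain-Python sketch. It does not use the Viam SDK: `Camera`, `SimulatedCamera`, `UsbWebcam`, and `capture_frame` are hypothetical names chosen for illustration. The point is that code written against the interface keeps working when the hardware behind it changes.

```python
from typing import Protocol


class Camera(Protocol):
    """Hypothetical stand-in for a camera API: anything with get_image()."""

    def get_image(self) -> bytes: ...


class SimulatedCamera:
    """Hypothetical camera backed by the Gazebo simulation."""

    def get_image(self) -> bytes:
        return b"frame-from-gazebo"


class UsbWebcam:
    """Hypothetical camera backed by a USB webcam."""

    def get_image(self) -> bytes:
        return b"frame-from-webcam"


def capture_frame(camera: Camera) -> bytes:
    # Depends only on the camera interface, never on the concrete hardware.
    return camera.get_image()


if __name__ == "__main__":
    for cam in (SimulatedCamera(), UsbWebcam()):
        print(type(cam).__name__, capture_frame(cam))
```

Swapping `SimulatedCamera` for `UsbWebcam` requires no change to `capture_frame`, which mirrors how services and your own code only talk to the camera API.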

Verify the camera is working. Every component in Viam has a built-in test card right in the configuration view.

With inspection-cam selected, find its test card. The camera component test card uses the camera API to show an image feed in the Viam app, so you can confirm that your camera is working. You should see a live video feed from the simulated camera: an overhead view of the conveyor/staging area.

Your camera is working. You can stream video and capture images from the simulated inspection station.

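Now you’ll add machine learning to run inference on your camera feed. You need two services: an ML model service that loads a trained model, and a vision service that uses that model to run inference on camera images.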

Instead of adding each service manually, you’ll use a fragment. A fragment is a reusable block of configuration that can include components, services, modules, and ML models. Fragments let you share tested configurations across machines and teams.

The try-vision-pipeline fragment includes an ML model service loaded with a can defect detection model and a vision service wired to that model. The fragment accepts a camera_name variable so it works with any camera.

Add the try-vision-pipeline fragment to your machine part. The fragment needs to know which camera to use for inference.

In the fragment’s configuration panel, find the Variables section.

Set the camera_name variable to inspection-cam:

```json
{
  "camera_name": "inspection-cam"
}
```

Click Save in the upper right corner.

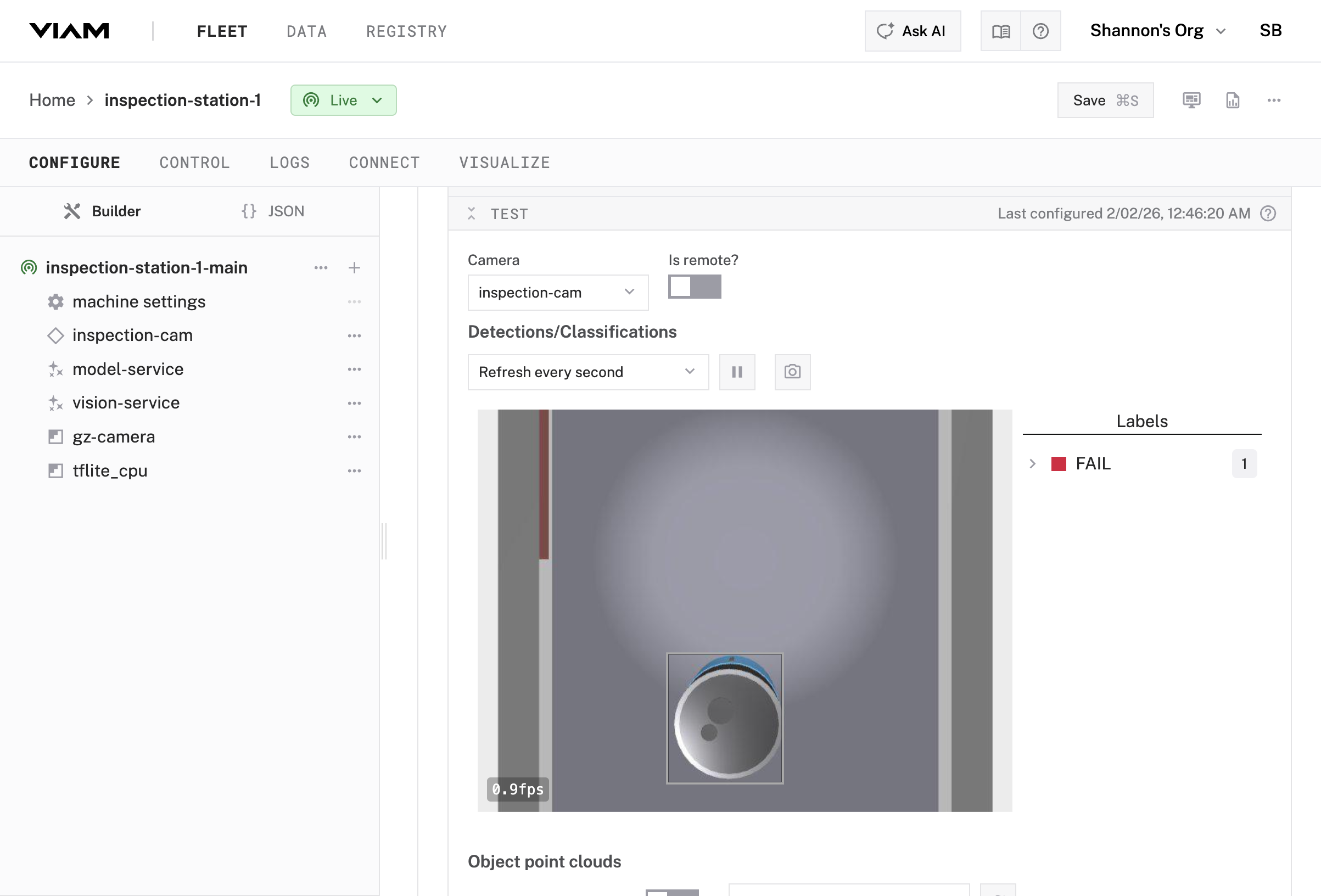

The fragment added two services and their dependencies to your machine: an ML model service that loads the can-defect-detection model from the Viam registry (this model classifies cans as PASS or FAIL), and a vision service that runs that model on images from your camera. The fragment also added the TFLite CPU module and the ML model package. Everything is wired together and ready to use.

This fragment works with any camera. If you were using a USB webcam instead of the simulation camera, you’d set camera_name to whatever you named your webcam component. The ML pipeline stays the same. This is how fragments enable reuse across different hardware setups.
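The reuse idea can be sketched as template substitution. This is a minimal illustration, not Viam's fragment format: the config shape is simplified and hypothetical, and the `${camera_name}` placeholder syntax comes from Python's `string.Template`. One template yields a working config for whichever camera name you pass in.

```python
import json
from string import Template

# Hypothetical, simplified fragment template. The ${camera_name} placeholder is
# Python string.Template syntax, used here only for illustration.
FRAGMENT_TEMPLATE = """
{
  "services": [
    {"name": "mlmodel", "type": "mlmodel"},
    {"name": "vision-service", "type": "vision",
     "attributes": {"model_service": "mlmodel", "camera_name": "${camera_name}"}}
  ]
}
"""


def render_fragment(variables: dict[str, str]) -> dict:
    """Fill in the fragment's variables and parse the result as JSON.

    Raises KeyError if a required variable is missing.
    """
    filled = Template(FRAGMENT_TEMPLATE).substitute(variables)
    return json.loads(filled)


if __name__ == "__main__":
    # Same template, two different (hypothetical) camera names.
    for camera in ("inspection-cam", "usb-webcam"):
        config = render_fragment({"camera_name": camera})
        print(config["services"][1]["attributes"]["camera_name"])
```

Only the variable changes between setups; the ML part of the template is untouched.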

Open the vision-service configuration panel, select inspection-cam as the camera source, and turn on Live to see results on the live feed.

You now have a complete ML inference pipeline. The vision service grabs an image from the camera, runs it through the TensorFlow Lite model, and returns structured results. The same pattern works for any ML task, whether object detection, classification, or segmentation: swap the model and camera, and the pipeline still works.
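The flow the vision service performs (image in, model scores, structured results out) can be sketched in plain Python. Everything here is hypothetical: `classify_from_camera`, `Classification`, and `fake_defect_model` are illustrative stand-ins, not the Viam SDK, and the fake model simply treats any image containing `b"dent"` as defective instead of running TensorFlow Lite.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Classification:
    """Structured result: a label plus a confidence score."""
    class_name: str
    confidence: float


# Hypothetical types: a camera returns image bytes; a model maps bytes to label scores.
Camera = Callable[[], bytes]
Model = Callable[[bytes], dict[str, float]]


def classify_from_camera(camera: Camera, model: Model, count: int = 1) -> list[Classification]:
    """Grab an image, run the model, and return the top `count` labels."""
    image = camera()
    scores = model(image)
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [Classification(name, score) for name, score in ranked[:count]]


def fake_defect_model(image: bytes) -> dict[str, float]:
    # Stand-in for the real model: images containing b"dent" score as FAIL.
    if b"dent" in image:
        return {"PASS": 0.1, "FAIL": 0.9}
    return {"PASS": 0.95, "FAIL": 0.05}


if __name__ == "__main__":
    print(classify_from_camera(lambda: b"can-with-dent", fake_defect_model))
```

Because the camera and model are just parameters, replacing either one leaves `classify_from_camera` unchanged, which is the property the paragraph above describes.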

You added a camera component manually and used a fragment to add a complete ML vision pipeline. The system can detect defective cans. Next, you’ll set up continuous data capture so every detection is recorded and queryable.

Everything you configured through the UI is stored as JSON. Click JSON in the upper left of the CONFIGURE tab to see the raw configuration. You’ll see your camera component, the fragment reference, and how the fragment’s services connect to your camera. As configurations grow more complex, the JSON view helps you understand how components and services connect.
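Because the configuration is plain JSON, you can also inspect it programmatically. The sketch below assumes a simplified, hypothetical config shape with `components` and `fragments` keys; the real JSON in the Viam app contains more fields and its exact key names may differ. `<fragment-id>` is a placeholder, not a real ID.

```python
import json

# Simplified, hypothetical machine config for illustration only.
CONFIG_JSON = """
{
  "components": [
    {"name": "inspection-cam", "attributes": {"id": "/inspection_camera"}}
  ],
  "fragments": [
    {"id": "<fragment-id>", "variables": {"camera_name": "inspection-cam"}}
  ]
}
"""


def summarize(config_text: str) -> list[str]:
    """List each component and the camera each fragment is bound to."""
    config = json.loads(config_text)
    lines = [f"component: {c['name']}" for c in config.get("components", [])]
    for fragment in config.get("fragments", []):
        camera = fragment.get("variables", {}).get("camera_name", "?")
        lines.append(f"fragment {fragment['id']} -> camera {camera}")
    return lines


if __name__ == "__main__":
    print("\n".join(summarize(CONFIG_JSON)))
```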

Continue to Part 2: Data Capture →
